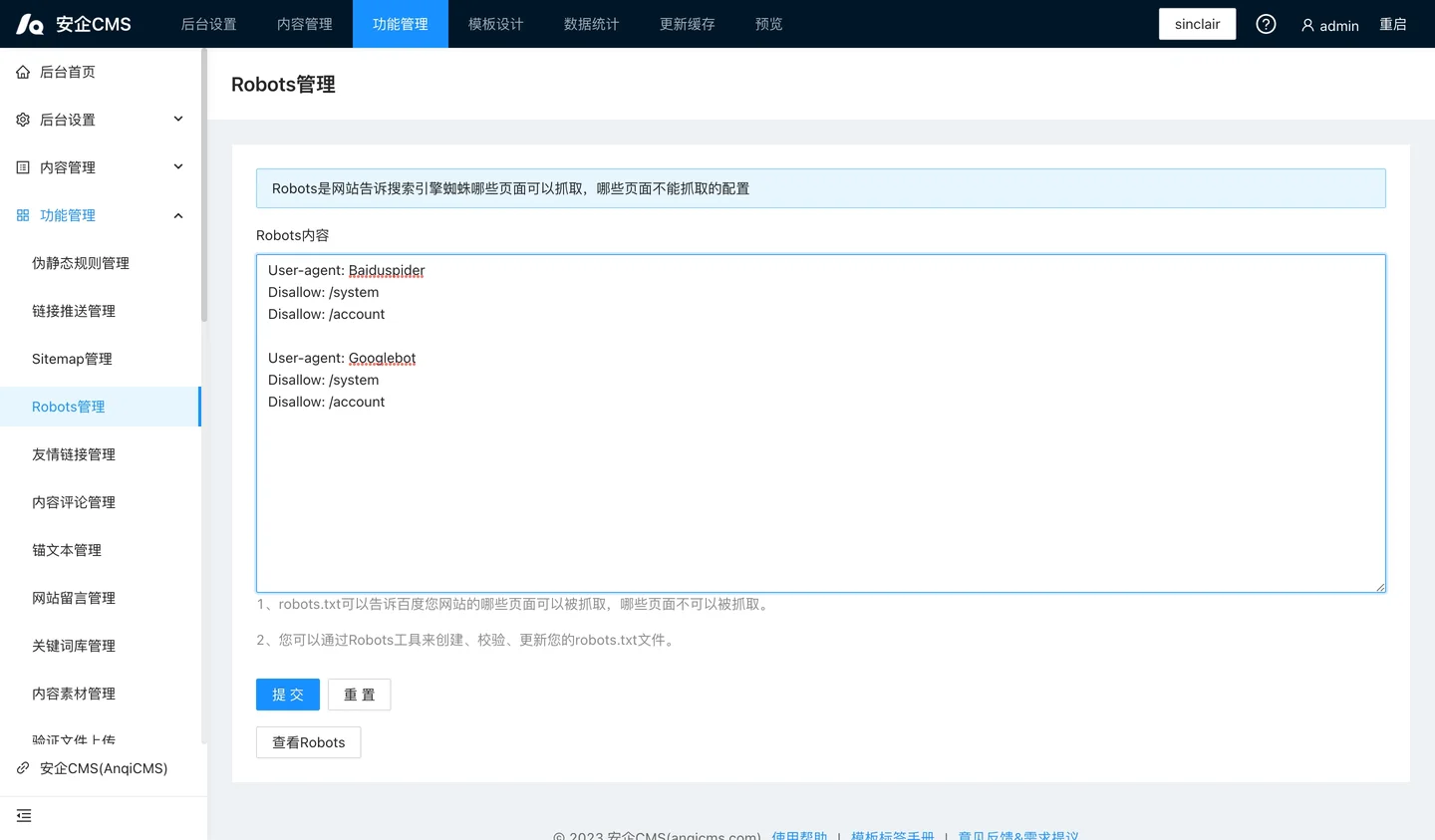

robots.txt is a protocol file for search engine spiders. Its purpose is to tell them which pages of your website may be crawled and which may not. It is a plain text file that follows the Robots Exclusion Standard and consists of one or more rules; each rule allows or disallows specific search engine spiders from crawling the specified paths on the website. If you do not provide a robots.txt file, all files may be crawled by default.

The robots.txt rules include:

User-agent: The user agent (UA) identifier of the crawler that the rule applies to; you can view the identifiers here.

Allow: Allows crawling of the given path.

Disallow: Prevents crawling of the given path.

Sitemap: The location of the sitemap. There is no limit on the number of entries, so you can add multiple sitemap links.

#: Starts a comment line.
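To make the directive list concrete, here is a minimal sketch that splits robots.txt lines into directive and value pairs and drops comments. The helper name `parse_line`, the `KNOWN_DIRECTIVES` set, and the `example.com` URL are illustrative assumptions, not part of any standard tool, and real crawlers recognize more directives than the four covered here.

```python
# Directives described in this article; real parsers accept more.
KNOWN_DIRECTIVES = {"user-agent", "allow", "disallow", "sitemap"}


def parse_line(line):
    """Return (directive, value) for a rule line, or None for
    blank lines, comment-only lines, and unknown directives."""
    # Everything after '#' is a comment and is ignored.
    content = line.split("#", 1)[0].strip()
    if ":" not in content:
        return None
    # Split on the first ':' only, so URLs in the value stay intact.
    name, value = content.split(":", 1)
    name = name.strip().lower()  # directive names are case-insensitive
    if name not in KNOWN_DIRECTIVES:
        return None
    return name, value.strip()


sample = [
    "# block one crawler",
    "User-agent: YisouSpider",
    "Disallow: /   # everything",
    "Sitemap: https://example.com/sitemap.xml",  # hypothetical URL
]
parsed = [parse_line(line) for line in sample]
```

Splitting on the first colon only is what keeps the `https://` in a sitemap URL from being cut apart.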

This is a simple robots.txt file containing two rules.

User-agent: YisouSpider

Disallow: /

User-agent: *

Allow: /

Sitemap: /sitemap.xml

User-agent: YisouSpider means that the rule applies to the user agent named "YisouSpider" (the search engine spider of Yisou). It can also be set to bingbot (Bing), Googlebot (Google), and so on. The user agent names of other search engine crawlers can be viewed here.

Disallow: / means that access to all content is disallowed, so this user agent may not crawl any part of the website.

User-agent: * means that the rule applies to all user agents (* is a wildcard) that are not matched by a more specific rule. Allow: / means that these user agents can crawl the entire website. It does not matter if this rule is omitted; the result is the same, because the default behavior is that a user agent can crawl the entire website.

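The line 'Sitemap:' means that the sitemap file of the website is located at /sitemap.xml. In practice, crawlers generally expect this value to be a fully qualified URL that includes the site's domain, rather than a bare path.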
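The behavior described above can be checked with Python's standard `urllib.robotparser` module. This is a sketch under a few assumptions: the page URL on `example.com` and the helper name `build_parser` are hypothetical, `site_maps()` requires Python 3.8 or later, and this parser treats a blank line as the end of a group, so each group's lines are kept together here. Other crawlers may interpret edge cases differently.

```python
from urllib.robotparser import RobotFileParser


def build_parser(robots_txt):
    """Parse robots.txt content given as a string (no network access)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


# The example file from this article, one group per block.
ROBOTS_TXT = """\
User-agent: YisouSpider
Disallow: /

User-agent: *
Allow: /

Sitemap: /sitemap.xml
"""

page = "https://example.com/articles/1.html"  # hypothetical page URL

full = build_parser(ROBOTS_TXT)
yisou_allowed = full.can_fetch("YisouSpider", page)   # blocked by Disallow: /
google_allowed = full.can_fetch("Googlebot", page)    # falls under User-agent: *
sitemaps = full.site_maps()                           # values of Sitemap: lines

# Same file without the "User-agent: *" group: other crawlers are
# still allowed, because allowing everything is the default.
no_wildcard = build_parser("User-agent: YisouSpider\nDisallow: /\n")
google_allowed_default = no_wildcard.can_fetch("Googlebot", page)

print(yisou_allowed, google_allowed, sitemaps, google_allowed_default)
```

Parsing from a string instead of calling `set_url()` and `read()` keeps the example offline; against a live site you would point the parser at the site's own robots.txt instead.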